How do you learn to trust the machine? Introducing the Enterprise Context Store.

Jonas De Keuster

"We're going to use AI agents to build and maintain our data platform."

A lot of data teams are now seriously considering exactly that: automating modeling, code generation, contracts, semantic layers. But there is a step that almost always gets underestimated, and it is the one that determines whether the whole thing works.

You can run an agent today to execute a data management task, and it will likely produce code that runs cleanly. The question is whether it builds what you actually want, and for most teams that uncertainty is the real blocker.

Ask a general-purpose agent to build a star schema for your order management domain. It knows what a star schema is and has read everything ever written about them, so it will improvise on whatever build strategies are publicly available and apply them to the input you gave it. It produces something technically correct: fact tables, dimensions, grain defined. But it does not know that your "customer" entity means something different in your ERP than in your CRM, that your order lines carry a status field that has been redefined three times since the original implementation, or that your finance team already made a governance decision about how revenue is attributed. The output looks right. It might be deeply wrong.

Scale that to a real platform build and the problem compounds. A real platform build means dozens of agents running in parallel, each handling a different domain or layer, each improvising from the same generic public knowledge and arriving at their own interpretation of your domain. The result is a set of outputs that each make sense on their own but break down where they meet, with no shared understanding holding the platform together.

The instinctive fix is to feed each agent everything you have and hope it figures out what matters: more documents, more schema dumps, more prompt engineering. Some teams go deep on this. Individual engineers become experts at coaxing the right output from a model, tokenmaxxing their way through builds and signalling productivity. But every build starts from scratch. The context each engineer feeds in reflects their own interpretation of what is needed, and nothing they learn compounds into something the next person can build on.

Call it the localhost problem: one engineer, a browser tab full of AI tools, a conceptual modeler running on port 8503, and a mental model of how it all fits together that lives nowhere except in their head. It works, but it creates two problems at once. The engineer knows how they got to the result (the prompts, the tricks, the sequence that finally produced something usable), and that knowledge is written down nowhere. Meanwhile nobody, including them, fully understands the code that came out. When that person leaves, you lose both.

Data engineering has always had a key-man risk problem. The person who built the pipelines is also the person who understands them. The promise of AI was that it should reduce that dependency, letting teams generate, iterate, and document faster than any individual could. With the localhost approach, the key person now also owns the prompts, the local tooling, and the interpretation layer on top. The knowledge is more scattered and harder to transfer than it was before.

The Enterprise Context Store

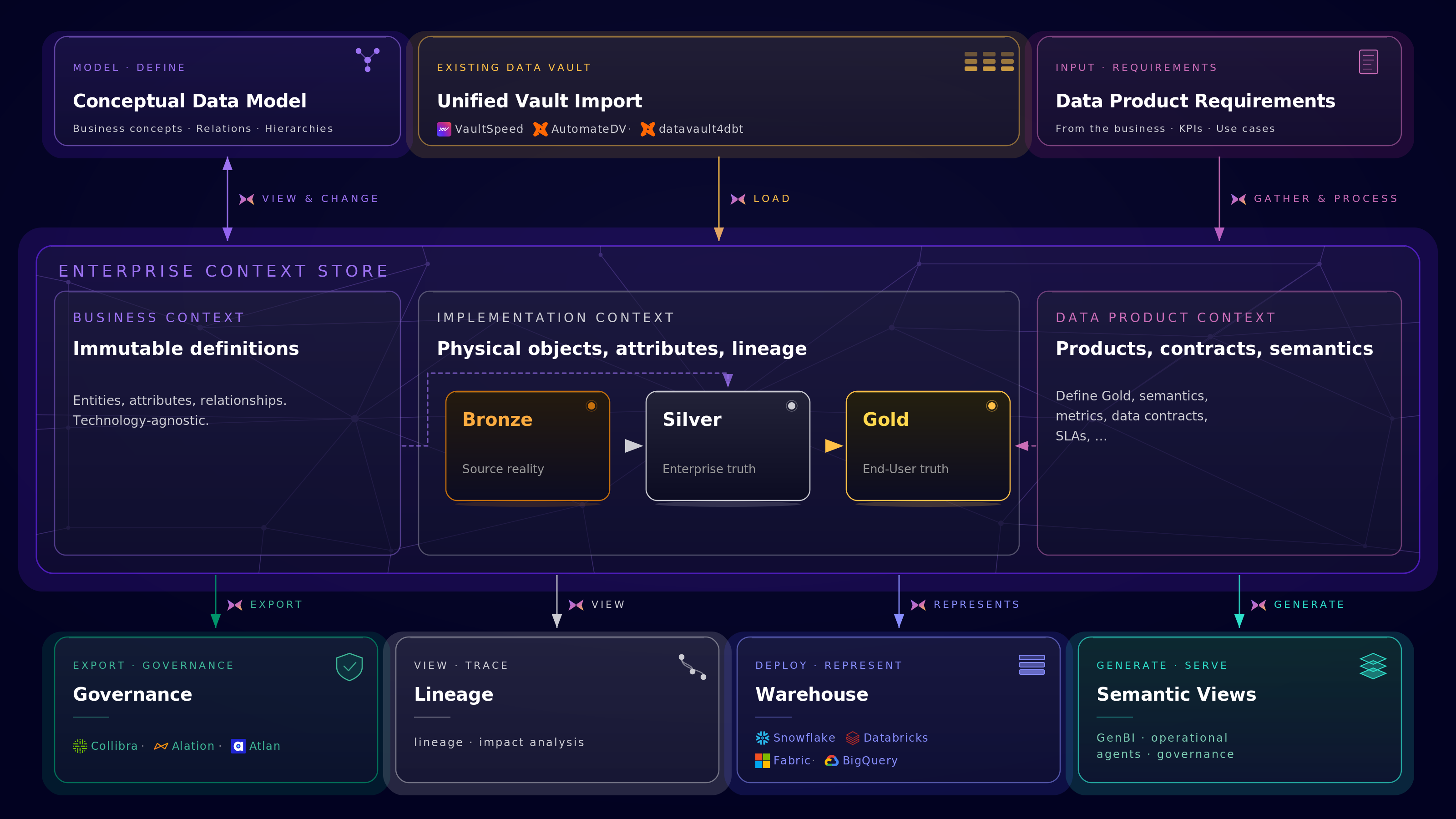

The pattern we keep coming back to is what we call the Enterprise Context Store (ECS): a structured, machine-readable foundation that holds everything an agent needs to act correctly on your data platform, built so that knowledge belongs to the organisation rather than to any individual.

VaultSpeed has spent more than ten years building a deterministic code generation engine that encodes enterprise Data Vault patterns. Before agents existed, we were already doing the hard part: taking metadata from source systems, aligning it to a business model, and generating production-grade transformation logic across Snowflake, Databricks, Fabric, and BigQuery at scale. The ECS is the next layer on top of that foundation, where a decade of vault automation meets the agent era.

In practice it replaces what most teams currently rely on: a data dictionary that nobody keeps up to date, a pile of documentation that does not connect to the actual implementation, or a graph database you adopted for a specific use case and then left running on the side.

It has three layers. The business context layer holds the immutable definitions of your domain: entities, attributes, and relationships, kept technology-agnostic and stable across platform migrations and restructures. This is the layer that encodes what your business actually means by "customer" or "order", independent of what any source system says. The implementation context layer tracks the physical reality of your platform across Bronze, Silver, and Gold, with full lineage between them. The data product context layer captures what the business asked for: product definitions, data contracts, SLAs, semantic mappings, and the KPIs that need to be derivable from the foundation below.
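To make the three layers concrete, here is a minimal Python sketch of how they could relate to each other. It is an illustration only, not VaultSpeed's data model: the class names (`BusinessEntity`, `PhysicalObject`, `DataProduct`, `ContextStore`), their fields, and the sample table names are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(frozen=True)
class BusinessEntity:
    """Business context: a technology-agnostic definition (hypothetical shape)."""
    name: str
    definition: str


@dataclass
class PhysicalObject:
    """Implementation context: one table in Bronze, Silver, or Gold."""
    name: str
    layer: str                                             # "bronze", "silver" or "gold"
    implements: str                                        # BusinessEntity name
    derived_from: list[str] = field(default_factory=list)  # upstream objects (lineage)


@dataclass
class DataProduct:
    """Data product context: what the business asked for."""
    name: str
    kpis: list[str]
    reads_from: list[str]                                  # physical objects it is built on


@dataclass
class ContextStore:
    entities: dict[str, BusinessEntity] = field(default_factory=dict)
    objects: dict[str, PhysicalObject] = field(default_factory=dict)
    products: dict[str, DataProduct] = field(default_factory=dict)

    def lineage(self, object_name: str) -> list[str]:
        """Walk upstream from one physical object to everything it derives from."""
        seen: list[str] = []
        stack = [object_name]
        while stack:
            current = stack.pop()
            if current in seen:
                continue
            seen.append(current)
            obj = self.objects.get(current)
            if obj is not None:
                stack.extend(obj.derived_from)
        return seen

    def entities_behind(self, product_name: str) -> set[str]:
        """Trace a data product back to the business entities it depends on."""
        found: set[str] = set()
        for root in self.products[product_name].reads_from:
            for name in self.lineage(root):
                if name in self.objects:
                    found.add(self.objects[name].implements)
        return found


# Sample content; every name below is made up for the example.
store = ContextStore()
store.entities["Customer"] = BusinessEntity("Customer", "A party that has placed an order")
store.objects["erp_customers_raw"] = PhysicalObject("erp_customers_raw", "bronze", "Customer")
store.objects["crm_accounts_raw"] = PhysicalObject("crm_accounts_raw", "bronze", "Customer")
store.objects["hub_customer"] = PhysicalObject(
    "hub_customer", "silver", "Customer", ["erp_customers_raw", "crm_accounts_raw"]
)
store.objects["dim_customer"] = PhysicalObject("dim_customer", "gold", "Customer", ["hub_customer"])
store.products["customer_360"] = DataProduct("customer_360", ["active_customers"], ["dim_customer"])

print(store.lineage("dim_customer"))
print(store.entities_behind("customer_360"))
```

The point of the sketch is the direction of the references: a data product points at physical objects, physical objects point at each other and at a business entity, and the business entity points at nothing platform-specific.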

The ECS also does not start empty. VaultSpeed has processed the metadata from hundreds of enterprise deployments across financial services, insurance, manufacturing, retail, life sciences, higher education, and telecom. Encoded in that history are the design patterns, the edge cases, and the resolutions that work: when a multi-active satellite is the right answer and when it is a symptom of a modeling problem, how to handle same-as links for identity resolution across source systems, how to structure hub groups for master data taxonomies. These are hard patterns to get right, especially if your team does not have deep Data Vault experience. That accumulated knowledge ships as part of the store from day one.

Enterprise truth and end-user truth

The business context in the ECS drives the design of the enterprise truth: the integrated, governance-aligned foundation that everything else is derived from. It needs to be deterministic, repeatable, and auditable, independent of any individual's prompting style or tool preferences. Data Vault is the natural pattern here for environments with horizontally distributed data across many source systems, but the principle holds regardless of the pattern you use. This foundation compounds value only if it is built with discipline, and discipline requires structure that survives individual contributors.

The end-user truth is where the context shifts to business outcomes. Data products are shaped for specific needs, they change with those needs, and the agent's job is to map requirements against what the enterprise truth already provides. Because the entity definitions, governance rules, and lineage are already encoded, the agent is working from a solid base rather than interpreting the domain from scratch. Skills keep agents in line across both: every action is scoped to a well-defined interface, so the enterprise truth and the end-user truth stay connected and coherent as the platform grows.

Agents interact with the store only through purpose-built skills, each with a well-defined interface. That constraint keeps the store coherent, and it is also what makes the system genuinely trustworthy. When every agent action is scoped to a skill, the approval surface stays manageable. You are reviewing a proposal from a system that already knows your domain, your governance rules, and your naming conventions. If context is missing, the skill surfaces the gap before the agent proceeds rather than after the output turns out wrong.
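As a rough illustration of that constraint, the sketch below shows a skill that declares the context it needs and reports a gap instead of producing output when something is missing. The `Skill` class, the key names such as `governance:revenue_attribution`, and the plain-dictionary store are assumptions made for the example, not the actual ECS interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Skill:
    """A hypothetical skill: a named action with an explicit list of required context."""
    name: str
    required_context: tuple

    def run(self, store: dict, request: dict) -> dict:
        # Check for missing context before any work is done.
        missing = [key for key in self.required_context if key not in store]
        if missing:
            return {"status": "context_gap", "skill": self.name, "missing": missing}
        # Otherwise return a proposal for review, carrying the context it relied on.
        return {
            "status": "proposal",
            "skill": self.name,
            "request": request,
            "context": {key: store[key] for key in self.required_context},
        }


# A toy store with one governance decision not yet recorded.
context = {
    "entity:customer": "A party that has placed an order",
    "naming:fact_prefix": "fct_",
}
propose_fact = Skill(
    name="propose_revenue_fact",
    required_context=(
        "entity:customer",
        "naming:fact_prefix",
        "governance:revenue_attribution",
    ),
)

gap = propose_fact.run(context, {"domain": "order_management"})
print(gap["status"], gap["missing"])

# Once the decision is recorded, the same skill yields a reviewable proposal.
context["governance:revenue_attribution"] = "Attribute revenue to the invoicing entity"
proposal = propose_fact.run(context, {"domain": "order_management"})
print(proposal["status"])
```

Because the skill declares its requirements up front, a reviewer sees either a named gap or a proposal with its supporting context attached, which is what keeps the approval surface small.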

The compounding effect

Every decision made through the ECS compounds into the store and becomes available to every subsequent build. The first data product you build takes longer because you are encoding the domain: aligning source metadata to your business model, capturing governance decisions, defining what "customer" means across your ERPs and CRMs. Skills guide you through that early work, surfacing the right context and flagging gaps as you go, so the store gains density faster than it would if engineers were working from scratch. The tenth data product takes a fraction of the time because the store already holds your entity definitions, your lineage rules, and your naming conventions.
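A toy model of that effect, assuming each data product needs a set of context items and only the ones not yet in the store have to be encoded. The function name `build_product` and the item names are hypothetical, and real effort is of course not a simple count.

```python
def build_product(store: set, needed: set) -> set:
    """Return the context that had to be newly encoded, and record it in the store."""
    newly_encoded = needed - store
    store.update(newly_encoded)
    return newly_encoded


store = set()  # the store as a plain set of context items, sparse at first

first = build_product(store, {"customer", "order", "revenue_attribution"})
second = build_product(store, {"customer", "order", "product"})
third = build_product(store, {"customer", "product"})

# Work per build shrinks as earlier decisions are reused.
print(len(first), len(second), len(third))  # prints: 3 1 0
```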

Through well-defined skills, the ECS also connects to all the tools your team already uses: your conceptual modeling process, your data product requirements gathering, your governance catalogues like Collibra or Alation for lineage publishing and policy enforcement, your data platforms like Snowflake or Databricks for deployment, and your transformation layer whether that runs on dbt or plain SQL. Governance tools catalogue what you have. SQL and dbt execute what you build. Neither connects business definitions to implementation to data products. The ECS holds that shared context, and skills are the interface through which each tool reads from and contributes back to it.

With the ECS in place, the knowledge that used to walk out the door stays in the platform. A new team member onboarding gets far more shared context than they would in a localhost-style setup: the domain definitions, governance decisions, and design patterns are already encoded. Think about what that means when you need to ingest a new source system and the engineer who set up the last three integrations is no longer around. The ECS does not replace domain expertise, but it makes that expertise available to everyone who comes after.

Well-scoped skills mean smaller models handle routine work reliably, without paying frontier-model prices for every build step. Early builds are slower because the store is sparse. Later builds are faster and more accurate because the domain knowledge is denser. That is what a compounding platform looks like. That is how you learn to trust the machine.

VaultSpeed's agentic platform is now in early access. If your organisation has an existing Data Vault investment and wants to explore what the ECS could deliver, we would welcome the conversation.